The rapid growth of artificial intelligence (AI) is undeniable; it is transforming the audiovisual industry, bringing both new opportunities and significant challenges, particularly regarding job security, copyright, and ethical standards. Tasks traditionally reserved for human creativity, such as writing, music composition, and visual concept development, are increasingly being handled by intelligent systems capable of generating original content. This technological evolution is raising profound questions about the future of creative work.

Copyright battles and legislative pressure

A central and highly debated issue is copyright, particularly since AI models are often trained on vast amounts of protected material, such as films, series, and music, without authorization or compensation for rights holders. Current law typically protects only works stemming from human intellect, creating complex questions about works generated with AI assistance.

This environment has led to legal conflicts, such as the lawsuit filed by The New York Times against OpenAI for unauthorized use of content. Simultaneously, major technology companies like OpenAI and Google are lobbying for exemptions from current copyright regulations to gain unrestricted access to content they deem necessary for AI training.

In response to this growing influence, more than 400 entertainment figures—including Paul McCartney, Cate Blanchett, and Alfonso Cuarón—signed an open letter to the White House demanding stricter AI regulation and stronger copyright protections.

Labor market disruptions and union response

The audiovisual workforce views AI as a major threat to job stability, prompting significant labor mobilization. This concern was central to the historic 2023 Hollywood strike, where unions like SAG-AFTRA (representing over 65,000 actors) and the Writers Guild of America (WGA) (with 11,000 members) protested studios’ intentions to use AI to digitally replicate actors’ likenesses. The prospect of digital replication has forced actors to consider how best to protect their image rights and artistic identity. Furthermore, voice actors have raised alarms over the unauthorized use of their voices to train algorithms.

Regulatory landscape and ethical guidelines

The increasing use of AI also raises critical issues surrounding personality rights, transparency, and the potential for disinformation through the creation of fake content, including deepfakes.

In Europe, the European Audiovisual Observatory (EAO) has published a legal report, AI in the Audiovisual Sector: Navigating the Current Legal Landscape, examining the intersection of AI with existing regulation, including the General Data Protection Regulation (GDPR) and the newly enacted AI Act. The report analyzes challenges related to authorship, liability, and the threat AI poses to cultural diversity and media pluralism by inadvertently reinforcing biases.

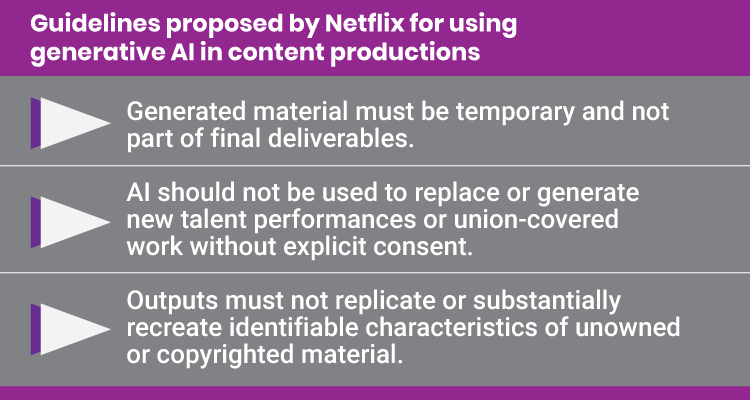

Meanwhile, major streamers are implementing their own internal rules. Netflix has established detailed guidelines for using generative AI in content production, which also apply to "partner vendors". The company emphasizes that these tools should be used "transparently and responsibly".

The guidelines specify several critical principles, including:

- Generated material must be temporary and not part of final deliverables.

- AI should not be used to replace or generate new talent performances or union-covered work without explicit consent.

- Outputs must not replicate or substantially recreate identifiable characteristics of unowned or copyrighted material.

Netflix stresses that if partners cannot confidently affirm all these principles, written approval is required before proceeding. The challenge now is to develop ethical guidelines and effective regulations that can protect industry workers while accommodating this irreversible technological shift.